
The AI Production Paradox: What 2,527 enterprise leaders told us

For two years, the conversation in enterprise AI has been the same. Getting pilots into production is the bottleneck. Break through that, and you’re ahead.

Our new research says that story is wrong – and the data to back it up comes from 2,527 enterprise decision-makers across 10 countries and six industries. Today, we’re releasing early findings from The AI Production Paradox, an independent Sinch study on the real state of AI in customer communications.

Here’s what we found.

Most enterprises have already crossed the finish line

There’s a widely accepted story in enterprise AI that the biggest challenge is getting pilots into production. McKinsey reported in 2025 that two-thirds of organizations remained stuck in experimentation phases. BCG found that 60% had yet to show any material value from their AI investments. Gartner forecast that half of all generative AI projects would be abandoned after proof of concept.

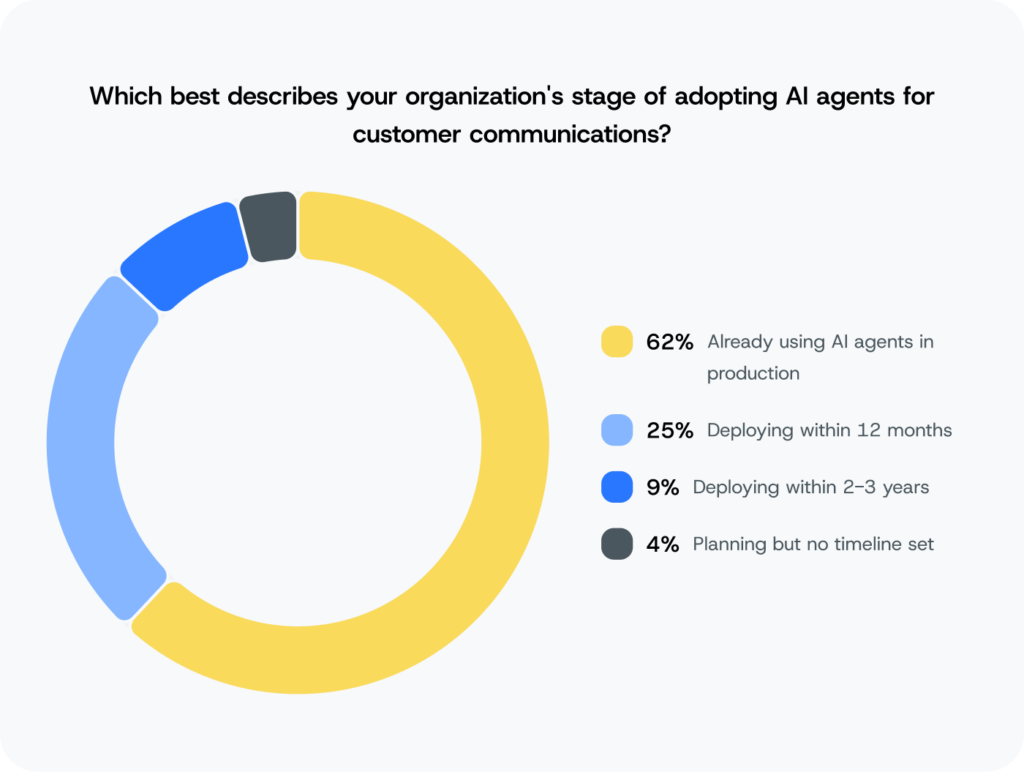

In customer communications specifically, something different happened. Our research shows 62% of organizations already have AI agents live in production across customer channels, and 88% expect to be there by the end of 2026. That’s nearly nine in ten businesses actively deploying AI agents by the end of this year.

Enterprises aren’t dipping a toe in either. The average deployment spans 3.3 channels simultaneously, with nearly half running AI across four or more – web chatbots, email, social media, WhatsApp, SMS/MMS, RCS, voice, and interactive voice response (IVR). And the leading goal behind that investment isn’t cost reduction. For 36% of respondents, the primary objective is improving customer satisfaction and loyalty. They’re using AI to compete on customer experience, and to earn something harder to measure than efficiency: customer trust.

By every metric the market established to measure AI progress, these organizations won. They escaped pilot purgatory. They crossed the finish line.

Except that wasn’t the finish line.

Production is where the real story starts

Here’s the finding that should make every AI communications leader stop and read more carefully: 74% of organizations that successfully deployed AI communications agents have been forced to shut them down or roll them back.

That holds across every region and every industry in our study. It doesn’t decline with experience. It doesn’t decline with investment. All along, the market has been drawing the wrong finish line – and what happens after enterprises successfully ship radically changes the question every AI communications leader should be asking right now.

More governance doesn’t mean fewer failures

Here’s where it gets interesting. Among organizations that describe their guardrails as fully mature – the most governed, most monitored AI programs in the survey – the rollback rate is 81%.

More governance, more monitoring, more investment – and still, eight in ten of the most advanced programs have had to shut something down.

74%: average rollback rate among organizations that reached production. (Sinch, 2026)

81%: rollback rate among organizations with fully mature guardrails. (Sinch, 2026)

The data offers a worrying explanation. Organizations with mature governance instrumentation can see failures that less mature organizations miss entirely. The programs reporting lower rollback rates aren’t necessarily running cleaner AI – in many cases, they simply lack the monitoring to know when something goes wrong. The organizations reporting no governance failures are not the benchmark. They may just be the ones with the least visibility into what’s happening.
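The visibility argument comes down to simple arithmetic: a rollback is only reported when a failure both happens and gets noticed. The sketch below is a minimal illustration under that assumption. The helper `observed_rollback_rate` and every rate in it are hypothetical, chosen only to show the effect, and are not figures from the study.

```python
def observed_rollback_rate(true_failure_rate, detection_rate):
    """Share of programs that report a rollback.

    Simplifying assumption: a rollback is reported only when a failure
    occurs AND monitoring detects it, and the two are independent.
    """
    return true_failure_rate * detection_rate


# Hypothetical inputs (not survey data): both programs fail equally often,
# but only one has the instrumentation to notice most failures.
well_monitored = observed_rollback_rate(true_failure_rate=0.8, detection_rate=0.9)
low_visibility = observed_rollback_rate(true_failure_rate=0.8, detection_rate=0.5)

print(f"well-monitored program reports: {well_monitored:.0%}")
print(f"low-visibility program reports: {low_visibility:.0%}")
```

Under these made-up inputs, the program with better monitoring reports the higher rollback rate even though the underlying reliability is identical, which is why a low reported rate is a weak benchmark on its own.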

“If governance was the fix, the most mature teams would roll back less. They don’t. What’s breaking isn’t the policy layer. It’s reliability in the real system: data, workflows, integrations, and edge cases. And the cost is real: 84% of AI engineering teams spend at least half their time on safety infrastructure instead of improving the customer experience. That’s the guardrail tax.”

And then there’s the confidence problem. 90% of enterprise decision-makers describe themselves as confident in their AI agent readiness. Of those already in production, 75% have experienced at least one governance rollback. Confidence doesn’t correlate with fewer failures. In many cases, it’s the precise condition under which the next failure is being prepared.

The more useful question for any leadership team isn’t “are we confident?” It’s “if something went wrong right now, would we know before our customers did?”

When an AI agent fails, three things break at once

When an AI communications agent fails in production, customers notice. Our data shows the impact splits in three directions simultaneously – and most organizations are only tracking the first.

The support queue

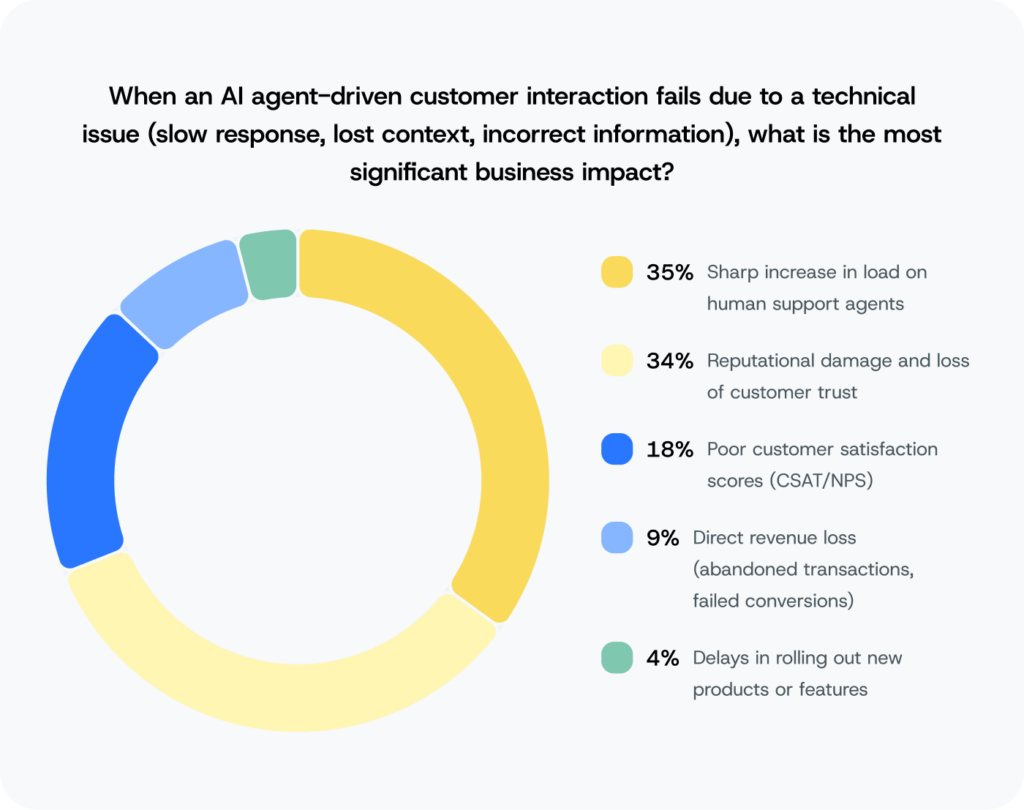

35% of organizations cite a surge in human support agent load as the primary consequence. The agent goes down, and every interaction it was handling reverts to a human. A support team sized for a world where AI handles significant volume is suddenly managing all of it. At peak moments – a product launch, a service outage, a seasonal spike – that’s not an inconvenience. It’s an operational crisis.

This is the failure mode that gets reported upward. It shows up in dashboards, generates incident reviews, and resolves when the agent comes back online. It’s visible, it’s measurable, and it has a clear path to resolution.

The brand

34% cite reputational damage and loss of customer trust – essentially tied with support overload. That near-tie is one of the most underreported findings in the survey, because these two failure modes don’t resolve the same way. The support queue clears. Brand damage doesn’t have a clear path back.

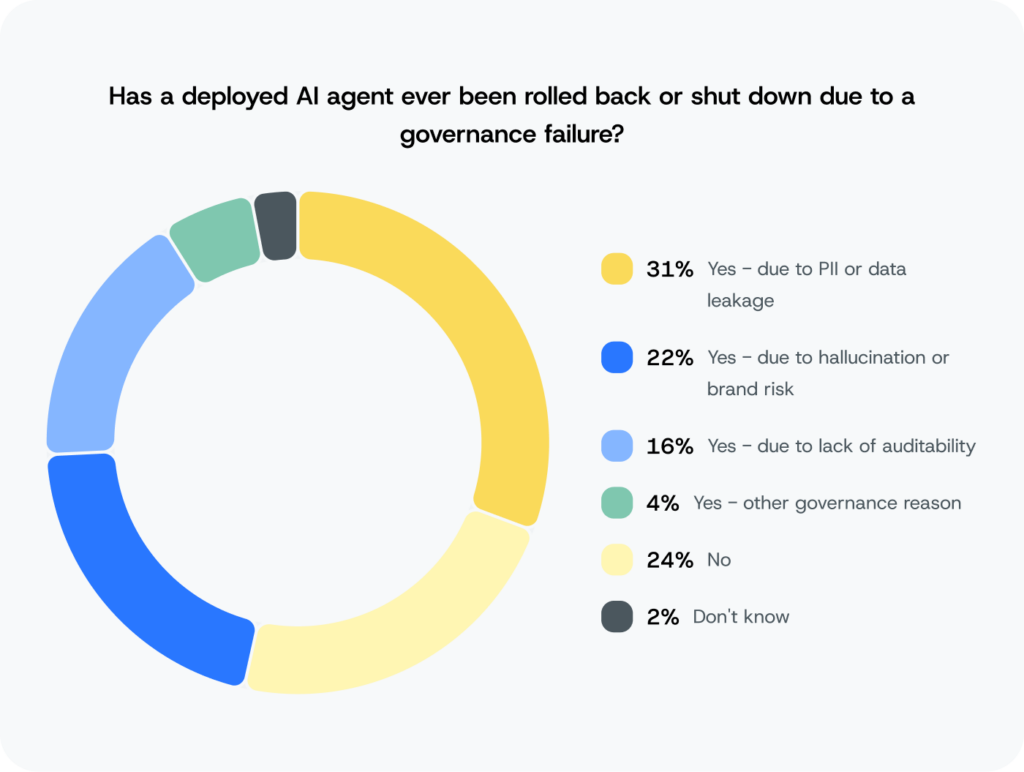

From the customer’s perspective, there’s no platform, no vendor, no infrastructure layer. There’s only your brand. For 31% of organizations, the leading cause of a governance failure rollback is customer data exposure: personal information surfacing in an interaction where it shouldn’t have. That attribution is permanent in a way that a queue spike is not.

What makes this harder to address is that it often isn’t visible to the people who could act on it. Technical leaders report rollbacks at a higher rate than their business counterparts at the same organizations – 77% versus 69%. In retail, C-suite executives are 2.3x more likely than their VPs and Directors to say most AI communications pilots are succeeding. Same organization, very different accounts of the same events. That visibility gap is where the brand takes the hit.

The engineering backlog

There’s a third cost that appears in neither the dashboard nor the customer complaint. Sinch data shows 84% of AI communications engineering teams spend at least half their time building guardrails and safety controls – instead of building the next customer experience. 35% spend most of their time there.

And the direction of that burden surprises people. Production-stage engineering teams are spending more time on safety infrastructure than pre-production teams, not less. Each new agent, each new channel, each new compliance requirement adds another layer. The guardrail tax doesn’t amortize. It compounds.

“Every team needs to decide what controls belong at the platform layer and what their engineers should build on top, because the cost of building custom guardrails compounds over time, especially as the team moves through the product lifecycle. Each new agent, each new channel, each new deployment adds to the pile. And eventually you lose that momentum when it comes to outperforming on the market.”

The infrastructure gap is the actual story

Across every statistical method we applied to this dataset – correlations, regression models, cross-tabulations – one variable consistently outperforms all others as a predictor of AI deployment success: communications infrastructure satisfaction.

Not investment level. Not AI maturity. Not how long you’ve been in production. Not how sophisticated your safety policies are.

The correlation between infrastructure satisfaction and AI deployment confidence is 0.52 – the strongest relationship across 4,656 variable pairs analyzed in the study. How an organization feels about its communications infrastructure is a better predictor of AI success than anything else we measured.
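For readers who want to picture what a scan across variable pairs looks like, here is a minimal Python sketch. It is not the study's actual pipeline: the column names and toy responses are hypothetical, and plain Pearson correlation is an assumption about the method. One observation that is arithmetic rather than sourced: 4,656 equals the number of distinct pairs among 97 variables, so a 97-variable dataset would be consistent with that count, although the study does not state how many variables it used.

```python
from itertools import combinations
from math import comb, sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def strongest_pair(columns):
    """Return (name_a, name_b, r) for the pair with the largest absolute r."""
    best = None
    for a, b in combinations(sorted(columns), 2):
        r = pearson(columns[a], columns[b])
        if best is None or abs(r) > abs(best[2]):
            best = (a, b, r)
    return best


# Hypothetical toy responses on 1-5 scales (NOT data from the study).
toy = {
    "infra_satisfaction": [1, 2, 3, 4, 5, 4],
    "deploy_confidence": [2, 2, 3, 5, 5, 4],
    "investment_level": [3, 1, 4, 2, 5, 1],
}

pair_count = comb(97, 2)  # distinct pairs among 97 variables: 4656
best = strongest_pair(toy)
print(pair_count)
print(best)
```

A correlation of 0.52 describes how closely two measures move together, not which one causes the other, so a scan like this identifies where to look rather than proving what drives success.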

Yet most organizations identify at least one significant shortcoming in their current provider. The most common gaps: insufficient reliability for AI at scale (42%), limited multi-channel capability (37%), and lack of AI platform integrations (32%).

And more than half of enterprises (55%) are custom-engineering the ability to preserve customer context when someone moves from one channel to another – from chat to voice, from WhatsApp to a phone call – because their platform doesn’t provide it natively. When a customer has to repeat themselves to an AI agent, they’re not experiencing a model failure. They’re experiencing the infrastructure gap directly. And it’s your brand that pays the price.

42% of respondents report insufficient reliability for AI at scale from their current provider. (Sinch, 2026)

37% of respondents cite limited multi-channel capability from their current provider. (Sinch, 2026)

32% of respondents report a lack of AI platform integrations from their current provider. (Sinch, 2026)

55% of enterprises are custom-engineering the ability to preserve customer context when someone moves from one channel to another. (Sinch, 2026)

The industry has voted with its budgets – trust, security, and compliance is the #1 spending category globally, ahead of AI development itself. But most of that investment is going into application-layer guardrails built by engineering teams, treating symptoms while the infrastructure underneath stays the same. That’s why 74% are still rolling back agents. You can invest heavily in safety and still fail, because the failure modes originate one layer below.

The market is already responding

Enterprises haven’t fully articulated that diagnosis yet, but their behavior suggests they’ve felt it. 86% have had active or exploratory conversations with alternative providers in the past 12 months, and only 4% have no plans to evaluate.

The strongest trigger for switching isn’t vendor dissatisfaction. It’s experience. 91% of enterprises that have had to roll back a live agent have evaluated or are actively evaluating a new communications provider. The most sophisticated buyers are the most active shoppers – not because they’re unhappy with a vendor, but because their AI ambitions have outgrown what the current infrastructure was built to handle.

When companies assess alternatives, reliability ranks first, with 29% of respondents placing it at the top, ahead of compliance capability, ease of integration, and – notably – pricing. Pricing ranked eighth out of nine factors in the survey.

What the data is asking

62% of organizations have an AI customer communications agent live. 88% will have one by the end of 2026.

Getting to production was hard, and most enterprises have made it. But the data is clear: escaping pilot purgatory wasn’t the hardest part. The organizations pulling ahead aren’t debating production timelines or investment cases anymore. They’ve deployed, they’re scaling – and what they’ve found on the other side is not what the market expected.

Three questions worth taking into your next leadership review:

- How much of your engineering team’s time is going toward building guardrails from scratch versus building the next customer experience?

- If your AI communications agent failed right now, would you know before your customers did?

- Is your communications infrastructure built for what you’re planning to deploy in the next 12 months, or for what you shipped 18 months ago?

The early findings from The AI Production Paradox are live now. The full report – including regional cuts across the Americas, EMEA, and APAC, vertical deep dives, and persona analysis – publishes in June.